Resources

Here we provide datasets, source code, and useful links. Please contact us with any questions.

Datasets

2024.03 We regret to announce that the collected talking videos will be withdrawn and can no longer be used for model training. Applicants and researchers who have already accessed the dataset shall follow the agreement and must not use, publish, retransmit, copy, reproduce, or redistribute the dataset under any conditions, including for non-commercial research or educational purposes.

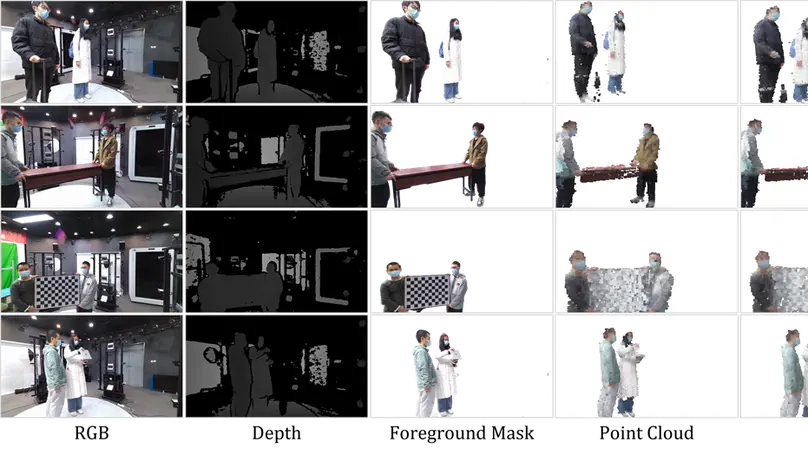

Introduction The people-object interaction dataset comprises 38 series of 30-view RGB-D video sequences of multi-person or single-person scenes, complemented by the corresponding camera parameters, foreground masks, and SMPL models, along with some point clouds and mesh files.

Introduction Our free-viewpoint RGB-D video dataset is a dynamic, synchronized HD RGB-D dataset for free-viewpoint synthesis, captured under conditions close to practical use. It consists of 14 groups of video sequences: 13 groups of human motion sequences, such as dancing, playing basketball, and playing football, and 1 group of empty-scene sequences, as shown below.

Ultra-high-resolution 8K 360-degree immersive video generally has a resolution of 8192 x 4096. It is still an emerging technology, so a great number of challenges remain to be solved. Video sequences play an important role in the corresponding research.

Introduction In the past two years, the standards for UHD parameters and HEVC have been approved in succession by the ITU. However, subjective evaluation of ultra-high-definition video, such as 4K, is still in its preliminary stages because uncompressed 4K video data is scarce, and 4K video quality databases are scarcer still.

The camera equipment used to produce our HDR dataset was chosen with care. Sony F65 and F55 cameras were used to capture all the UHD HDR video sequences. The raw video data was recorded in Sony RAW 16bit MAF format, which contains a dynamic range of more than 14 stops.
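
To give a rough sense of what "more than 14 stops" means, the sketch below converts stops to a contrast ratio. It relies only on the standard photographic definition that each stop is a doubling of light; the function name `stops_to_contrast_ratio` is a hypothetical helper for illustration and is not part of the dataset or of any Sony tool.

```python
def stops_to_contrast_ratio(stops: float) -> float:
    """Return the brightest-to-darkest ratio spanned by `stops`.

    Assumes the standard definition: one stop is a factor of two in light,
    so N stops span a ratio of 2**N : 1.
    """
    return 2.0 ** stops


if __name__ == "__main__":
    # 14 stops is the lower bound quoted for the HDR sequences.
    ratio = stops_to_contrast_ratio(14)
    print(f"14 stops ~ {ratio:,.0f}:1")
```

Under this definition, 14 stops corresponds to a contrast ratio of 16,384:1, so the quoted range exceeds that figure.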